Projects

2025 – 2027

GermanDialects AI

German Dialects - Document, Preserve and Learn (with the help of AI)

The project aims to document and preserve Upper German dialects and to support learning them through an AI-enhanced online platform.

2022-09 – 2026-08

Aspects of Noun Phrase Structure in Arabic

This project addresses problems in analyzing how quantificational material is integrated with non-quantificational material in noun phrases in natural language. It studies these interactions with particular reference to the behavior of comparative, superlative, and quantificational adjectives (‘much/little’), and with particular reference to Arabic, which has cross-linguistically rare properties that shed new light on these issues.

2025-10 – 2026-03

Studie zum Bundes-LLM (Study on a Federal LLM)

R&D service for the scientific preparation of a federal large language model (LLM) for the Austrian federal ministries, under the call AI Ökosysteme 2025 "AI for Tech & AI for Green"

OFAI coordinates an R&D service for the scientific preparation of a federal large language model (LLM) intended to serve as a sovereign and trustworthy foundation for digital administration and citizen services. To this end, possible technological solutions are examined from several perspectives: data and technology sovereignty; legal, ethical, and ecological challenges; security standards, auditing systems, and governance; interoperability with existing administrative systems; and the financial and staffing requirements for development and long-term operation.

2018 – 2025

LEGO Audio and Braille Building Instructions

In a series of cooperation projects with LEGO, OFAI has developed technology that generates verbal building instructions from the abstract representations on which LEGO's visual building instructions are based. These verbal instructions can be accessed via speech or Braille, enabling blind and visually impaired people to build and experience LEGO models on their own.

2024 – 2025

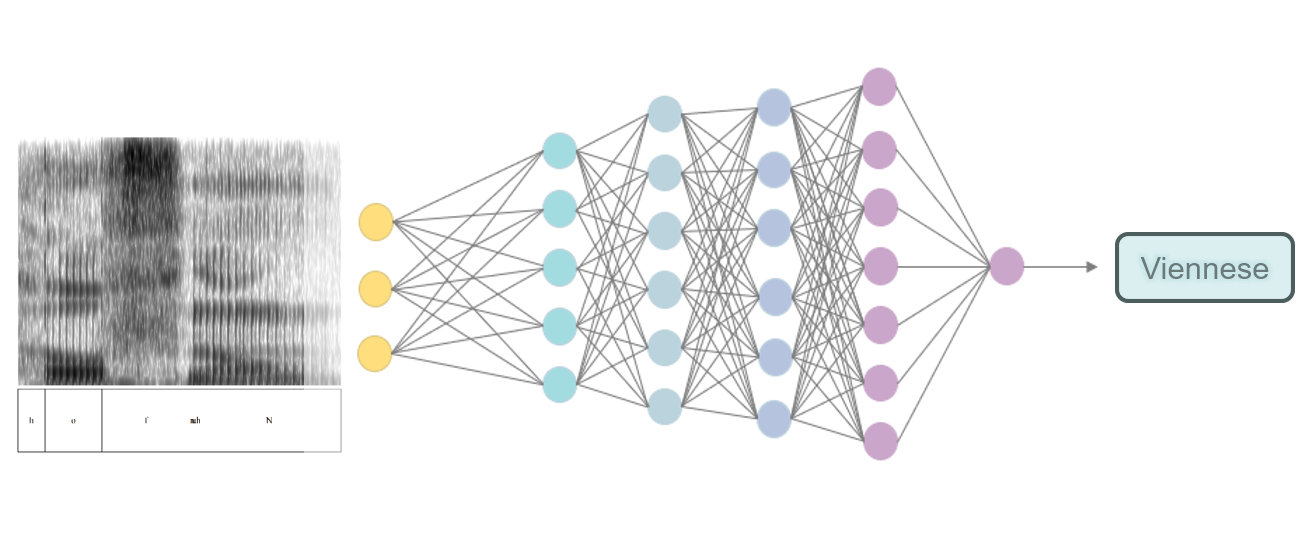

Dicla

Dialect Classification by Human and Artificial Intelligence

The primary objective is to develop an Explainable Artificial Intelligence (XAI) system dedicated to dialect classification.

2022 – 2025

Sipfront

Analysis of automated telco services test results

The project explores the use of AI and advanced data science methods for in-depth analysis of automated test results for telco services.

2021 – 2023

Ekip

A Platform for Ethical AI Applications

The project addresses the question of what it means to develop good and trustworthy AI applications. It asks which ethical, legal, and technical constraints must be taken into account, and how concrete ethics-by-design principles can be developed and implemented in a technical platform aiming for truly explainable and accountable AI. The research project brings together experts from ethics, AI ethics, computer science, AI, natural language processing, and data science.

2020 – 2023

Vokquant

Typology of Vowel and Consonant Quantity in Southern German varieties: Acoustic, Perception, and Articulatory Analyses of Adult and Child Speakers

An extended investigation of the (in)stability of phonemic quantity in vowel plus consonant (VC) sequences in southern German varieties has provided new evidence (1) that the Bavarian VC timing system is currently changing in both Austria and Germany, primarily due to dialect levelling, (2) that VC patterns in Swiss dialects are stable across generations, and (3) that aspirated stops are emerging in younger speakers of Bavarian and Swiss dialects.

2022 – 2023

DANCR

Embodied AI as a research tool for improvisation dance research

DANCR is an artistic, AI-driven tool. Improvisation is of utmost importance in contemporary dance. DANCR uses recordings of dance improvisations, in which a Pepper robot imitates the artist, to build AI models for new and creative human-robot interactions in live dance scenarios.