INSYNC

Synchronization and Communication in Music Ensembles

Interpersonal communication and the coordination and synchronization of actions are fundamental human capacities. People use these functions routinely in activities such as shaking hands, driving a car, playing sports, or playing music as part of an ensemble. To coordinate your actions with someone else’s, you must be able to predict how the other person is going to behave. Music ensemble performance provides a particularly interesting context for studying prediction and coordination because the synchronization between actions must be so precise. Since music is dynamic, or time-varying, ensemble musicians must make predictions about their co-performers’ behaviour as they play, relying primarily on nonverbal cues provided by their co-performers’ body movements, breathing, and sound. This research project investigates the mechanisms underlying musical synchronization in small ensembles, using a combination of perceptual/performance experiments and computational modelling techniques.

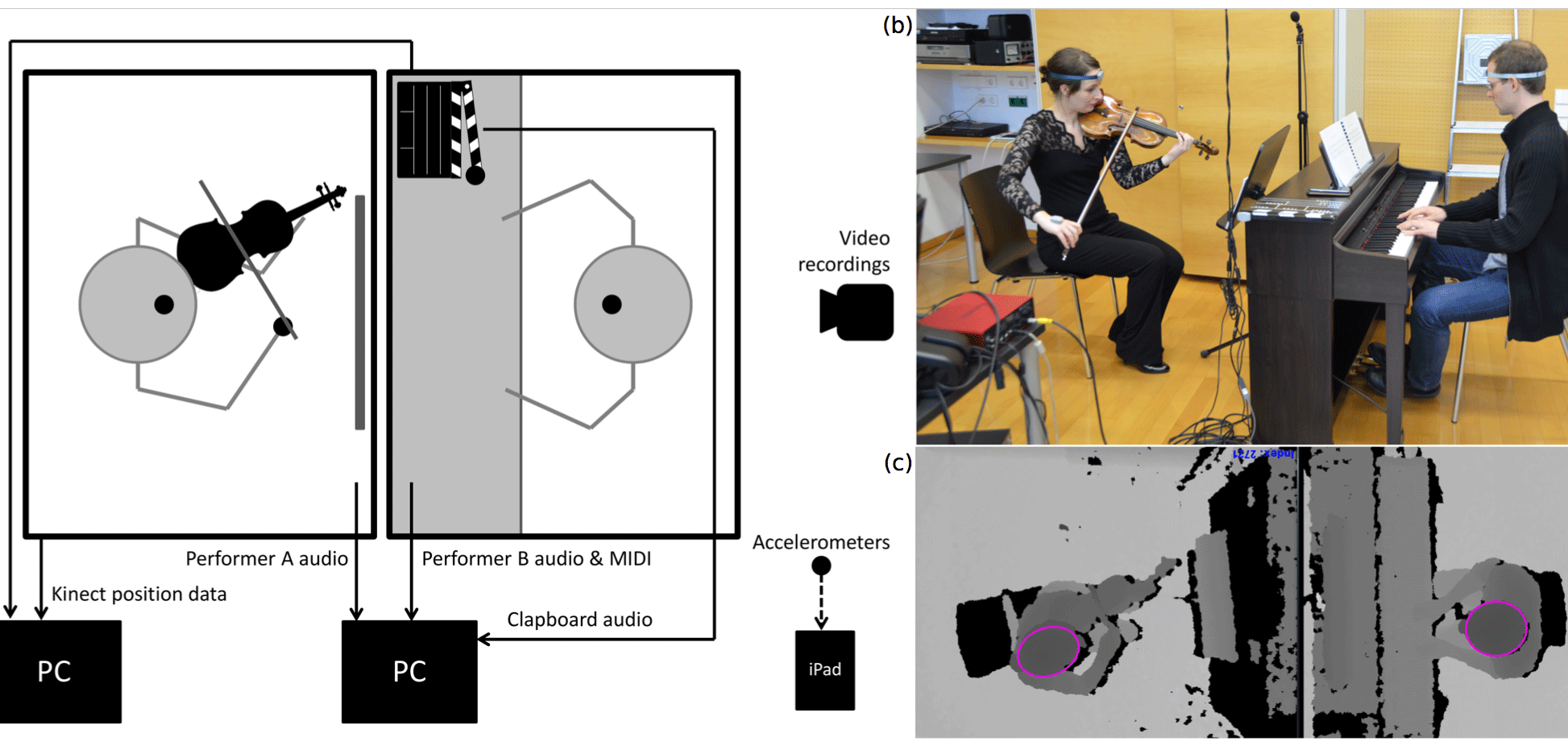

We are also investigating the cueing gestures that ensemble musicians use to communicate with each other. We focus on the gestures they use to cue each other in at the starts of pieces – a point where visual communication is particularly important. Different types of motion sensing equipment (Kinect sensors, inertial movement sensors, and an OptiTrack motion capture system) allow us to track the motion of multiple musicians simultaneously. With these measurements, we can explore the relationships between musicians' cueing gestures, the structural characteristics of the music, and the successful synchronization of sound.

In a recent experiment, we sought to identify the kinematic landmarks in musicians' head movements (i.e. extremes in position, velocity, and acceleration) that communicate beat position. Pairs of pianists and violinists performed short musical passages while leader/follower configurations, performance tempo, and instrument pairing were manipulated. Followers aligned their piece onsets with peaks in leaders' head acceleration, suggesting that acceleration – not spatial trajectory – communicates beat position.
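To make the notion of a kinematic landmark concrete, the sketch below derives velocity and acceleration from a sampled position trace by finite differences and reports the times of acceleration peaks. It is only an illustration, not the analysis pipeline used in the experiment: the function names, the 100 Hz sampling rate, and the synthetic sinusoidal "head movement" are all assumptions, and real motion data would normally be smoothed before differentiation.

```python
import math

def derivative(samples, dt):
    """Central-difference derivative, with one-sided differences at the two ends."""
    n = len(samples)
    out = []
    for i in range(n):
        lo = max(i - 1, 0)
        hi = min(i + 1, n - 1)
        out.append((samples[hi] - samples[lo]) / ((hi - lo) * dt))
    return out

def local_maxima(samples):
    """Indices of interior samples that exceed the previous and are not below the next."""
    return [i for i in range(1, len(samples) - 1)
            if samples[i - 1] < samples[i] >= samples[i + 1]]

def acceleration_peak_times(position, dt):
    """Times (in seconds) at which the second derivative of position peaks."""
    velocity = derivative(position, dt)
    acceleration = derivative(velocity, dt)
    return [i * dt for i in local_maxima(acceleration)]

# Hypothetical data: 1.5 s of a 1 Hz sinusoidal movement sampled at 100 Hz.
dt = 0.01
position = [math.sin(2 * math.pi * i * dt) for i in range(151)]
peak_times = acceleration_peak_times(position, dt)
```

In a cueing analysis along these lines, the resulting peak times could then be compared with the followers' note onsets to measure how closely the two align.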

A follow-up experiment investigated how gesture kinematics relate to successful synchronization at piece entrances. Musicians watched previously-recorded piano performances and tapped along with the beat of the music. Their first taps aligned more precisely with recorded piece onsets when the cueing gestures given by the pianists were large in magnitude, high in distinctiveness, and low in jitter. Pianists with conducting or extensive ensemble-playing experience produced higher-quality gestures than did pianists with little ensemble experience.

In another part of the project, we study timing adaptation processes with the help of computational models. We implemented existing timing models for sensorimotor synchronization and extended them for use in musical contexts. In a comprehensive cross-validation study, we evaluated how well these models track expressively performed music, using a substantial piano performance corpus (Golka & Goebl, 2014). The models were then built into a basic artificial accompaniment system that can co-perform with a human performer by tracking (and in part predicting) their performance (Golka, 2015).
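As a rough illustration of what a timing model for sensorimotor synchronization does, the following sketch predicts each note onset from the previous ones using linear phase and period correction, a generic form found in the synchronization literature. It is not the model set evaluated in the project: the function name `track_onsets`, the gains `alpha` and `beta`, and the example onset times are assumptions chosen for illustration.

```python
def track_onsets(onsets, initial_period, alpha=0.5, beta=0.1):
    """Predict each onset time using linear phase and period correction.

    alpha: phase-correction gain (hypothetical value)
    beta:  period-correction gain (hypothetical value)
    Returns the list of predicted onset times, one per observed onset.
    """
    period = initial_period
    prediction = onsets[0]  # assume the first onset is known in advance
    predictions = []
    for onset in onsets:
        predictions.append(prediction)
        asynchrony = prediction - onset  # positive when the prediction was late
        period -= beta * asynchrony      # period correction: adapt the tempo estimate
        prediction += period - alpha * asynchrony  # phase correction for the next onset
    return predictions

# Hypothetical performance: steady onsets every 0.5 s, tracked by a model
# that starts with a tempo estimate that is too slow (0.6 s per beat).
onsets = [0.5 * i for i in range(40)]
predictions = track_onsets(onsets, initial_period=0.6)
asynchronies = [p - o for p, o in zip(predictions, onsets)]
```

With these gains, the asynchronies shrink as the model adapts its period to the performer. An accompaniment system could schedule its own notes at the predicted times rather than react after each onset has been heard.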

We have also developed a professional science exhibit, “TappingFriend” (Im Takt bleiben), which enables participants to experience different levels of cooperation during synchronization with a human and a virtual partner (the Maestro).

In a further part of the project, we investigated performance parameters (in particular horizontal and vertical ensemble timing) that contribute to the sensation of “groove” in different playing styles (e.g., Swing or Latin). A pilot study with a jazz trio (saxophone, drums, bass, recorded with a multi-track paradigm) was carried out in cooperation with the University of Georgia (USA), recording combinations of six professional musicians to study synchronization behavior (Hofmann, Wesolowski, & Goebl, 2015; Hofmann, Goebl & Wesolowski, 2016). Additional perception experiments sought to characterize the cognitive and affective responses to groove-based music (Wesolowski, Hofmann, Schultz & Goebl, 2015; Wesolowski & Hofmann, 2015, 2016, PLoS ONE).

Publications

- Bishop, L., and Goebl, W. (2017). Communication for coordination: Gesture kinematics and conventionality affect synchronization success in piano duos. Psychological Research, published online 21 July 2017. doi: 10.1007/s00426-017-0893-3

- Hofmann, A., Wesolowski, B., and Goebl, W. (2017). The tight-interlocked rhythm section: Production and perception of synchronisation in Jazz Trio Performance. Journal of New Music Research, 46(4), 329–341. doi:10.1080/09298215.2017.1355394.

- Bishop, L., and Goebl, W. (2017). Beating Time: How ensemble musicians’ cueing gestures communicate beat position and tempo. Psychology of Music, 46(1), 84–106. doi: 10.1177/0305735617702971

- Wesolowski, B., & Hofmann, A. (2016). There’s more to Groove than bass in electronic dance music: Why some people won’t dance to Techno. PLoS ONE, 11(10), e0163938. doi:10.1371/journal.pone.0163938

- Bishop, L., and Goebl, W. (2015). When they listen and when they watch: Pianists’ use of nonverbal audio and visual cues during duet performance. Musicae Scientiae, 19(1), 84–110. doi:10.1177/1029864915570355.

- Bishop, L. and Goebl, W. (2014). Context-specific effects of musical expertise on audiovisual integration. Frontiers in Psychology | Cognitive Science, 6, 1123, doi:10.3389/fpsyg.2014.01123.

- Goebl, W., and Palmer, C. (2009). Synchronization of timing and motion among performing musicians. Music Perception, 26(5), 427–438, doi: 10.1525/mp.2009.26.5.427.

- Goebl, W. (2014). Translation in Performance Science. In W. Hasitschka (Ed.), Performing Translation. Schnittstellen zwischen Kunst, Pädagogik und Wissenschaft (pp. 357–367). Wien: Löcker-Verlag. ISBN: 978-3-85409-743-3.

- Bishop, L., & Goebl, W. (2016). Music and movement: Musical instruments and performers. In R. Ashley & R. Timmers (Eds.), Routledge Companion to Music Cognition. New York: Routledge. (pp. 349–361).

- Goebl, W. (2016). Geformte Zeit in der Musik. In W. Kautek (Ed.), Zeit in den Wissenschaften (Vol. 19, pp. 179–198). Wien: Böhlau Verlag.

- Bishop, L., & Goebl, W. (2017). Coordinating Piece Entries: How Gesture Kinematics Affect Cue Clarity. Paper to be presented at the 25th Anniversary Conference of the European Society for the Cognitive Sciences of Music, Ghent, Belgium, forthcoming.

- Bishop, L., & Goebl, W. (2016). Coordinating piece entrances: Communication of beat position and tempo through ensemble musicians' cueing gestures. Paper presented at the Jahrestagung der Deutschen Gesellschaft für Musikpsychologie. Vienna, Austria.

- Hofmann, A., Goebl, W., & Wesolowski, B. (2016). Synchronisation im Jazz Ensemble. Poster presented at the Jahrestagung der Deutschen Gesellschaft für Musikpsychologie. Vienna, Austria.

- Arzt, A., Goebl, W., & Widmer, G. (2015). Flexible score following: The Piano Music Companion and beyond. In A. Mayer, V. Chatziioannou, & W. Goebl (Eds.), Proceedings of the 3rd Vienna Talk on Music Acoustics (pp. 220–223), Vienna: Institute of Music Acoustics, University of Music and Performing Arts Vienna. urn:nbn:at:at-ubmw-20151022112731174-1423154-3

- Goebl, W., & Guggenberger, D. (2015). TappingFriend. An interactive science exhibit for experiencing synchronicity with real and artificial partners. In A. Mayer, V. Chatziioannou, & W. Goebl (Eds.), Proceedings of the 3rd Vienna Talk on Music Acoustics (pp. 227–230). Vienna: Institute of Music Acoustics, University of Music and Performing Arts Vienna. urn:nbn:at:at-ubmw-20151022112655691-1444937-3

- Bishop, L., & Goebl, W. (2015). Enabling synchronization: Auditory and visual modes of communication during ensemble performance. In A. Mayer, V. Chatziioannou, & W. Goebl (Eds.), Proceedings of the 3rd Vienna Talk on Music Acoustics (p. 207). Vienna: Institute of Music Acoustics, University of Music and Performing Arts Vienna.

- Bishop, L., & Goebl, W. (2015). Ready, Set, Go: Mapping the gestures used to cue entrances in duo performance. Paper presented at the International Symposium on Performance Science (ISPS'2015), Kyoto, Japan.

- Golka, G. (2015). Novel software tools for synchronization research. Poster presented at the Rhythm Production and Perception Workshop (RPPW'15), Amsterdam, NL.

- Hofmann, A., Wesolowski, B., & Goebl, W. (2015). Hi-hat as groove lock in a jazz ensemble? Poster presented at the Rhythm Production and Perception Workshop, Amsterdam, NL.

- Bishop, L. and Goebl, W. (2014). Effects of musical expertise on audiovisual integration: Instrument-specific or generalisable? In Moo Kyoung Song (Ed.), Proceedings of the ICMPC-APSCOM 2014 Joint Conference: 13th International Conference on Music Perception and Cognition and 5th Conference of Asia-Pacific Society for the Cognitive Sciences of Music, College of Music, Yonsei University, Seoul, Korea, p. 64.

- Golka, G. and Goebl, W. (2014). Tracking expressive performances with linear and non-linear timing models. In Moo Kyoung Song (Ed.), Proceedings of the ICMPC-APSCOM 2014 Joint Conference: 13th International Conference on Music Perception and Cognition and 5th Conference of Asia-Pacific Society for the Cognitive Sciences of Music, College of Music, Yonsei University, Seoul, Korea, pp. 164–165.

- Hadjakos, A., Grosshauser, T., and Goebl, W. (2013). Motion analysis of music ensembles with the Kinect. International Conference on New Interfaces for Musical Expression (NIME’13), Advanced Institute of Science and Technology, KAIST, Daejeon, Korea, pp. 106–110.

Research staff

- Werner Goebl

- Laura Bishop

- Gerald Golka

- Alex Hofmann

Sponsor

- Duration: 2012 to 2016

- Coordinator: OFAI

- Sponsor: Austrian Science Fund

- Contact: Robert Trappl